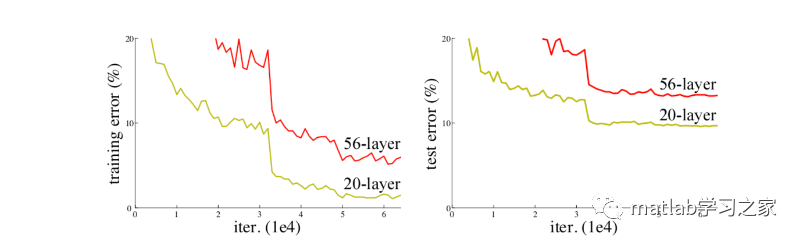

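In a CNN, the input is the pixel matrix of an image, which is also the most basic feature. The whole network is an information-extraction process that moves from low-level features to progressively more abstract ones. More layers mean a richer set of features at different levels of abstraction, and the deeper the layer, the more abstract and semantically meaningful the features it extracts. But is a deeper neural network always better? The accompanying figure compares conventional (plain) networks of different depths, and it shows that a deeper network does not necessarily perform better.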

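In a conventional CNN, increasing the depth tends to cause vanishing or exploding gradients. These are usually addressed with normalized initialization and intermediate normalization layers (for example, batch normalization), which allow deep networks to converge. Once they converge, however, another problem shows up: degradation. As more layers are added, accuracy on the training set saturates and then drops, so the effect cannot be explained by overfitting. Residual networks (ResNet) were proposed to address this.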
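To make the vanishing and exploding gradient problem concrete, here is a rough MATLAB illustration. It assumes, purely for illustration, that every layer scales the backpropagated gradient by the same constant factor; `shrinkFactor` and `growFactor` are made-up values, not measurements from any real network.

```matlab
% Illustrative only: model each layer's effect on the gradient as one scalar factor.
depths       = [10 20 50];   % network depths to compare
shrinkFactor = 0.9;          % assumed per-layer factor below 1
growFactor   = 1.1;          % assumed per-layer factor above 1

% After d layers the gradient is scaled by factor^d.
vanishing = shrinkFactor .^ depths;
exploding = growFactor   .^ depths;

disp(table(depths', vanishing', exploding', ...
    'VariableNames', {'depth','vanishing','exploding'}));
```

Even with factors this close to 1, the scaling changes by orders of magnitude at depth 50, which is why depth alone makes a plain network harder to train.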

I. Algorithm Principle

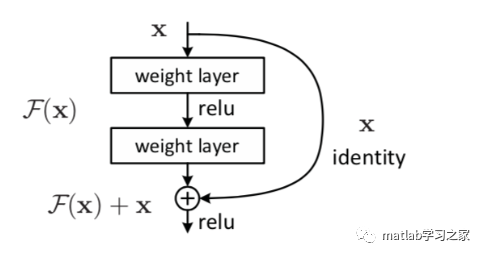

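Residual networks become easier to optimize by adding shortcut connections. A stack of a few layers together with one shortcut connection is called a residual block, as illustrated in the accompanying figure.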
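A residual block can also be written compactly as a formula. The notation below follows the usual ResNet formulation and is introduced here only for illustration: `x` is the block input, `y` its output, `F` the mapping computed by the stacked layers with weights `W_i`, and `H` the mapping the block is ultimately meant to represent.

```latex
y = \mathcal{F}(x, \{W_i\}) + x,
\qquad
\mathcal{F}(x) = \mathcal{H}(x) - x
```

The `+ x` term is the shortcut connection. The stacked layers are asked to fit the residual `H(x) - x` instead of `H(x)` itself.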

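The biggest difference between a plain network and a deep residual network is that the residual network has many bypass branches that feed the input directly to later layers, so those layers only have to learn the residual. These branches are called shortcuts. Conventional convolutional or fully connected layers lose or attenuate some information as they pass it along. ResNet mitigates this to some extent: by routing the input around the layers straight to the output, it preserves the information, and the network only needs to learn the difference between input and output, which simplifies the learning target and makes it easier to reach.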
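The point about only learning the difference can be seen in a toy example. The sketch below is not the network built later in this post; it is a minimal residual block on a vector, with hypothetical weights `W1` and `W2` deliberately set to zero, and it needs no toolbox.

```matlab
% Toy residual block on a vector input (illustrative, no toolbox needed).
relu = @(z) max(z, 0);

x  = [1; 2; 3; 4];     % example input
W1 = zeros(4);         % hypothetical weights, set to zero on purpose
W2 = zeros(4);

F = @(v) W2 * relu(W1 * v);   % residual branch F(x)

plainOut    = F(x);           % plain block: the input is lost
residualOut = F(x) + x;       % residual block: the shortcut adds the input back

disp(plainOut');
disp(residualOut');
```

With the weights at zero the plain block outputs zeros, while the residual block returns the input unchanged. An identity mapping is therefore the easy default for a residual block, and the weights only have to encode whatever differs from it.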

II. Hands-on Code

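Build a 19-layer ResNet (counting every layer in the layer graph, including the input, addition, and output layers), using load forecasting as the example.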

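%% Load and normalize the data
clc
clear
close all

load Train.mat
% load Test.mat

% One-hot encode the calendar variables
Train.weekend = dummyvar(Train.weekend);
Train.month = dummyvar(Train.month);
Train = movevars(Train,{'weekend','month'},'After','demandLag');
Train.ts = [];      % remove the ts column
Train(1,:) = [];    % remove the first row

y = Train.demand;   % target: demand
x = Train{:,2:5};   % predictors: columns 2 to 5

% Scale to [0, 1]; mapminmax normalizes each row, hence the transposes
[xnorm,xopt] = mapminmax(x',0,1);
[ynorm,yopt] = mapminmax(y',0,1);

% Use the first 1000 samples for training
xnorm = xnorm(:,1:1000);
ynorm = ynorm(1:1000);

k = 24; % lag (window) length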

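% Convert to 2-D "images": each sample is a 4-by-k window of predictors
numTrain = length(ynorm) - k;
Train_xNorm = cell(1,numTrain);
Train_yNorm = zeros(1,numTrain);
Train_y = cell(1,numTrain);
for i = 1:numTrain
    Train_xNorm{i} = xnorm(:,i:i+k-1);  % window covering steps i to i+k-1
    Train_yNorm(i) = ynorm(i+k-1);      % normalized target at the last step of the window
    Train_y{i} = y(i+k-1);              % raw target at the last step of the window
end
Train_x = Train_xNorm';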

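% Rows 1001 to 1170 of the same table serve as the test set
ytest = Train.demand(1001:1170);
xtest = Train{1001:1170,2:5};

% Apply the normalization settings fitted on the training data
xtestnorm = mapminmax('apply',xtest',xopt);
ytestnorm = mapminmax('apply',ytest',yopt);
% xtestnorm = [xtestnorm; Train.weekend(1001:1170,:)'; Train.month(1001:1170,:)'];
xtest = xtest';

numTest = length(ytestnorm) - k;
Test_xNorm = cell(1,numTest);
Test_yNorm = zeros(1,numTest);
Test_y = zeros(1,numTest);
for i = 1:numTest
    Test_xNorm{i} = xtestnorm(:,i:i+k-1);
    Test_yNorm(i) = ytestnorm(i+k-1);
    Test_y(i) = ytest(i+k-1);
end
Test_x = Test_xNorm';

% trainNetwork accepts a table: predictors in the first column, responses after it.
% The response column here holds the raw (unnormalized) demand.
x_train = table(Train_x,Train_y');
x_test = table(Test_x);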

%% Train/validation split (disabled)
% TrainSampleLength = length(Train_yNorm);
% validatasize = floor(TrainSampleLength * 0.1);
% Validata_xNorm = Train_xNorm(:,end - validatasize:end,:);
% Validata_yNorm = Train_yNorm(:,TrainSampleLength-validatasize:end);
% Validata_y = Train_y(TrainSampleLength-validatasize:end);
%
% Train_xNorm = Train_xNorm(:,1:end-validatasize,:);
% Train_yNorm = Train_yNorm(:,1:end-validatasize);
% Train_y = Train_y(1:end-validatasize);

%% Build the residual network
lgraph = layerGraph();

% Input is a 4-by-24 single-channel "image" (4 predictors by k = 24 time steps)
tempLayers = [
    imageInputLayer([4 24 1],"Name","imageinput")
    convolution2dLayer([3 3],32,"Name","conv","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);

% Block 1: batchnorm + relu branch (the shortcut is wired up further down)
tempLayers = [
    batchNormalizationLayer("Name","batchnorm")
    reluLayer("Name","relu")];
lgraph = addLayers(lgraph,tempLayers);

tempLayers = [
    additionLayer(2,"Name","addition")
    convolution2dLayer([3 3],32,"Name","conv_1","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);

% Block 2
tempLayers = [
    batchNormalizationLayer("Name","batchnorm_1")
    reluLayer("Name","relu_1")];
lgraph = addLayers(lgraph,tempLayers);

tempLayers = [
    additionLayer(2,"Name","addition_1")
    convolution2dLayer([3 3],32,"Name","conv_2","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);

% Block 3
tempLayers = [
    batchNormalizationLayer("Name","batchnorm_2")
    reluLayer("Name","relu_2")];
lgraph = addLayers(lgraph,tempLayers);

tempLayers = [
    additionLayer(2,"Name","addition_2")
    convolution2dLayer([3 3],32,"Name","conv_3","Padding","same")];
lgraph = addLayers(lgraph,tempLayers);

% Block 4, followed by the regression head
tempLayers = [
    batchNormalizationLayer("Name","batchnorm_3")
    reluLayer("Name","relu_3")];
lgraph = addLayers(lgraph,tempLayers);

tempLayers = [
    additionLayer(2,"Name","addition_3")
    fullyConnectedLayer(1,"Name","fc")
    regressionLayer("Name","regressionoutput")];
lgraph = addLayers(lgraph,tempLayers);

% Clean up the helper variable
clear tempLayers;

% Wire each block: conv -> batchnorm -> relu -> addition/in1,
% plus a shortcut from the same conv straight to addition/in2.
% Note that each shortcut bypasses only the batchnorm and relu layers.
lgraph = connectLayers(lgraph,"conv","batchnorm");
lgraph = connectLayers(lgraph,"conv","addition/in2");
lgraph = connectLayers(lgraph,"relu","addition/in1");

lgraph = connectLayers(lgraph,"conv_1","batchnorm_1");
lgraph = connectLayers(lgraph,"conv_1","addition_1/in2");
lgraph = connectLayers(lgraph,"relu_1","addition_1/in1");

lgraph = connectLayers(lgraph,"conv_2","batchnorm_2");
lgraph = connectLayers(lgraph,"conv_2","addition_2/in2");
lgraph = connectLayers(lgraph,"relu_2","addition_2/in1");

lgraph = connectLayers(lgraph,"conv_3","batchnorm_3");
lgraph = connectLayers(lgraph,"conv_3","addition_3/in2");
lgraph = connectLayers(lgraph,"relu_3","addition_3/in1");

% Inspect the architecture
plot(lgraph);
analyzeNetwork(lgraph);

%% Training options
maxEpochs = 60;
miniBatchSize = 20;
options = trainingOptions('adam', ...
    'MaxEpochs',maxEpochs, ...
    'MiniBatchSize',miniBatchSize, ...
    'InitialLearnRate',0.01, ...
    'GradientThreshold',1, ...
    'Shuffle','never', ...
    'Plots','training-progress', ...
    'Verbose',0);

%% Train and predict
net = trainNetwork(x_train,lgraph,options);

% The responses in x_train are raw demand values, so the predictions
% are already in the original units and need no inverse scaling.
Predict_y = double(predict(net,x_test));

% Plot actual vs. predicted demand in a new figure
figure
plot(Test_y)
hold on
plot(Predict_y)
legend('Actual','Predicted')

MATLAB residual neural network design
