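So far we have focused primarily on optimization algorithms, that is, on how to update the weight vectors, rather than on the rate at which they are updated. Nonetheless, adjusting the learning rate is often just as important as the actual algorithm. There are a number of aspects to consider: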

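Most obviously, the magnitude of the learning rate matters. If it is too large, optimization diverges; if it is too small, training takes too long or we end up with a suboptimal result. We saw previously that the condition number of the problem matters (see e.g., Section 12.6 for details). Intuitively, it is the ratio of the amount of change in the least sensitive direction to that in the most sensitive one.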
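To make the effect of the step size concrete, here is a minimal sketch (not part of the original text) that runs plain gradient descent on a hypothetical two-dimensional quadratic $f(x) = \frac{1}{2}(\lambda_1 x_1^2 + \lambda_2 x_2^2)$ with curvatures 1 and 10. The function name `run_gd` and the chosen curvatures are assumptions made purely for illustration. Each coordinate is multiplied by $(1 - \eta \lambda_i)$ per step, so the iterates blow up once $\eta > 2/\lambda_{\max}$, while a tiny $\eta$ barely moves along the flat direction.

```python
def run_gd(lr, curvatures=(1.0, 10.0), steps=100):
    """Run gradient descent on f(x) = 0.5 * sum(c_i * x_i**2), starting at all ones.

    Returns the largest absolute coordinate after `steps` updates.
    """
    x = [1.0 for _ in curvatures]
    for _ in range(steps):
        # The gradient of 0.5 * c * x**2 with respect to x is c * x.
        x = [xi - lr * c * xi for xi, c in zip(x, curvatures)]
    return max(abs(xi) for xi in x)


# Slightly above 2 / 10, slightly below it, and far too small.
for lr in (0.21, 0.19, 0.001):
    print(f'lr={lr}: distance from optimum after 100 steps = {run_gd(lr):.3g}')
```

The larger the ratio between the two curvatures, the narrower the range of learning rates that is both stable in the steep direction and reasonably fast in the flat one.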

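Second, the rate of decay is just as important. If the learning rate remains large, we may simply end up bouncing around the minimum and thus never reach optimality. Section 12.5 discussed this in some detail, and we analyzed performance guarantees in Section 12.4. In short, we want the rate to decay, but probably more slowly than $O(t^{-1/2})$, which would be a good choice for convex problems.

Another aspect that is equally important is initialization. This pertains both to how the parameters are set initially (see Section 5.4 for details) and to how they evolve initially. This goes under the moniker of warmup, i.e., how rapidly we start moving towards the solution at the beginning. Large steps early on might not be beneficial, in particular since the initial set of parameters is random. The initial update directions might be quite meaningless, too.

Lastly, there are a number of optimization variants that perform cyclical learning rate adjustment. This is beyond the scope of the current chapter. We recommend that the reader review the details in Izmailov et al. (2018), e.g., how to obtain better solutions by averaging over an entire path of parameters.

Given that a lot of detail is needed to manage learning rates, most deep learning frameworks have tools to deal with this automatically. In the current chapter we will review the effects that different schedules have on accuracy and also show how this can be managed efficiently via a learning rate scheduler.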

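12.11.1. Toy Problem

We begin with a toy problem that is cheap enough to compute easily, yet sufficiently nontrivial to illustrate some of the key aspects. For that we pick a slightly modernized version of LeNet (relu instead of sigmoid activation, MaxPooling rather than AveragePooling), applied to Fashion-MNIST. Moreover, we hybridize the network for performance. Since most of the code is standard, we just introduce the basics without further detailed discussion. See Section 7 for a refresher as needed.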

%matplotlib inline
import math
import torch
from torch import nn
from torch.optim import lr_scheduler
from d2l import torch as d2l

def net_fn():
    model = nn.Sequential(
        nn.Conv2d(1, 6, kernel_size=5, padding=2), nn.ReLU(),
        nn.MaxPool2d(kernel_size=2, stride=2),
        nn.Conv2d(6, 16, kernel_size=5), nn.ReLU(),
        nn.MaxPool2d(kernel_size=2, stride=2),
        nn.Flatten(),
        nn.Linear(16 * 5 * 5, 120), nn.ReLU(),
        nn.Linear(120, 84), nn.ReLU(),
        nn.Linear(84, 10))
    return model

loss = nn.CrossEntropyLoss()
device = d2l.try_gpu()

batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

# The code is almost identical to `d2l.train_ch6` defined in the
# lenet section of chapter convolutional neural networks
def train(net, train_iter, test_iter, num_epochs, loss, trainer, device,
          scheduler=None):
    net.to(device)
    animator = d2l.Animator(xlabel='epoch', xlim=[0, num_epochs],
                            legend=['train loss', 'train acc', 'test acc'])

    for epoch in range(num_epochs):
        metric = d2l.Accumulator(3)  # train_loss, train_acc, num_examples
        for i, (X, y) in enumerate(train_iter):
            net.train()
            trainer.zero_grad()
            X, y = X.to(device), y.to(device)
            y_hat = net(X)
            l = loss(y_hat, y)
            l.backward()
            trainer.step()
            with torch.no_grad():
                metric.add(l * X.shape[0], d2l.accuracy(y_hat, y), X.shape[0])
            train_loss = metric[0] / metric[2]
            train_acc = metric[1] / metric[2]
            if (i + 1) % 50 == 0:
                animator.add(epoch + i / len(train_iter),
                             (train_loss, train_acc, None))

        test_acc = d2l.evaluate_accuracy_gpu(net, test_iter)
        animator.add(epoch+1, (None, None, test_acc))

        if scheduler:
            if scheduler.__module__ == lr_scheduler.__name__:
                # Using PyTorch In-Built scheduler
                scheduler.step()
            else:
                # Using custom defined scheduler
                for param_group in trainer.param_groups:
                    param_group['lr'] = scheduler(epoch)

    print(f'train loss {train_loss:.3f}, train acc {train_acc:.3f}, '
          f'test acc {test_acc:.3f}')

%matplotlib inline
from mxnet import autograd, gluon, init, lr_scheduler, np, npx
from mxnet.gluon import nn
from d2l import mxnet as d2l

npx.set_np()

net = nn.HybridSequential()
net.add(nn.Conv2D(channels=6, kernel_size=5, padding=2, activation='relu'),
        nn.MaxPool2D(pool_size=2, strides=2),
        nn.Conv2D(channels=16, kernel_size=5, activation='relu'),
        nn.MaxPool2D(pool_size=2, strides=2),
        nn.Dense(120, activation='relu'),
        nn.Dense(84, activation='relu'),
        nn.Dense(10))
net.hybridize()
loss = gluon.loss.SoftmaxCrossEntropyLoss()
device = d2l.try_gpu()

batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

# The code is almost identical to `d2l.train_ch6` defined in the
# lenet section of chapter convolutional neural networks
def train(net, train_iter, test_iter, num_epochs, loss, trainer, device):
    net.initialize(force_reinit=True, ctx=device, init=init.Xavier())
    animator = d2l.Animator(xlabel='epoch', xlim=[0, num_epochs],
                            legend=['train loss', 'train acc', 'test acc'])
    for epoch in range(num_epochs):
        metric = d2l.Accumulator(3)  # train_loss, train_acc, num_examples
        for i, (X, y) in enumerate(train_iter):
            X, y = X.as_in_ctx(device), y.as_in_ctx(device)
            with autograd.record():
                y_hat = net(X)
                l = loss(y_hat, y)
            l.backward()
            trainer.step(X.shape[0])
            metric.add(l.sum(), d2l.accuracy(y_hat, y), X.shape[0])
            train_loss = metric[0] / metric[2]
            train_acc = metric[1] / metric[2]
            if (i + 1) % 50 == 0:
                animator.add(epoch + i / len(train_iter),
                             (train_loss, train_acc, None))
        test_acc = d2l.evaluate_accuracy_gpu(net, test_iter)
        animator.add(epoch + 1, (None, None, test_acc))
    print(f'train loss {train_loss:.3f}, train acc {train_acc:.3f}, '
          f'test acc {test_acc:.3f}')

%matplotlib inline
import math
import tensorflow as tf
from tensorflow.keras.callbacks import LearningRateScheduler
from d2l import tensorflow as d2l

def net():
    return tf.keras.models.Sequential([
        tf.keras.layers.Conv2D(filters=6, kernel_size=5, activation='relu',
                               padding='same'),
        tf.keras.layers.AvgPool2D(pool_size=2, strides=2),
        tf.keras.layers.Conv2D(filters=16, kernel_size=5,
                               activation='relu'),
        tf.keras.layers.AvgPool2D(pool_size=2, strides=2),
        tf.keras.layers.Flatten(),
        tf.keras.layers.Dense(120, activation='relu'),
        tf.keras.layers.Dense(84, activation='sigmoid'),
        tf.keras.layers.Dense(10)])

batch_size = 256
train_iter, test_iter = d2l.load_data_fashion_mnist(batch_size=batch_size)

# The code is almost identical to `d2l.train_ch6` defined in the
# lenet section of chapter convolutional neural networks
def train(net_fn, train_iter, test_iter, num_epochs, lr,
          device=d2l.try_gpu(), custom_callback=False):
    device_name = device._device_name
    strategy = tf.distribute.OneDeviceStrategy(device_name)
    with strategy.scope():
        optimizer = tf.keras.optimizers.SGD(learning_rate=lr)
        loss = tf.keras.losses.SparseCategoricalCrossentropy(from_logits=True)
        net = net_fn()
        net.compile(optimizer=optimizer, loss=loss, metrics=['accuracy'])
    callback = d2l.TrainCallback(net, train_iter, test_iter, num_epochs,
                                 device_name)
    if custom_callback is False:
        net.fit(train_iter, epochs=num_epochs, verbose=0,
                callbacks=[callback])
    else:
        net.fit(train_iter, epochs=num_epochs, verbose=0,
                callbacks=[callback, custom_callback])
    return net


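Let's have a look at what happens if we invoke this algorithm with default settings, such as a learning rate of 0.3, and train for 30 iterations. Note how the training accuracy keeps on increasing while progress in terms of test accuracy stalls beyond a point. The gap between both curves indicates overfitting.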

lr, num_epochs = 0.3, 30
net = net_fn()
trainer = torch.optim.SGD(net.parameters(), lr=lr)
train(net, train_iter, test_iter, num_epochs, loss, trainer, device)

train loss 0.159, train acc 0.939, test acc 0.882

lr, num_epochs = 0.3, 30

net.initialize(force_reinit=True, ctx=device, init=init.Xavier())

trainer = gluon.Trainer(net.collect_params(), 'sgd', {'learning_rate': lr})

train(net, train_iter, test_iter, num_epochs, loss, trainer, device)

train loss 0.135, train acc 0.948, test acc 0.885

lr, num_epochs = 0.3, 30
train(net, train_iter, test_iter, num_epochs, lr)

loss 0.218, train acc 0.918, test acc 0.889 46772.7 examples/sec on /GPU:0

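12.11.2. Schedulers

One way of adjusting the learning rate is to set it explicitly at each step. This is conveniently achieved by the `set_learning_rate` method in MXNet; in PyTorch the value is written into `trainer.param_groups`, as the snippets below show. We could adjust it downward after every epoch (or even after every minibatch), e.g., in a dynamic manner in response to how optimization is progressing.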

lr = 0.1

trainer.param_groups[0]["lr"] = lr

print(f'learning rate is now {trainer.param_groups[0]["lr"]:.2f}')

learning rate is now 0.10

trainer.set_learning_rate(0.1)

print(f'learning rate is now {trainer.learning_rate:.2f}')

learning rate is now 0.10

lr = 0.1
dummy_model = tf.keras.models.Sequential([tf.keras.layers.Dense(10)])
dummy_model.compile(tf.keras.optimizers.SGD(learning_rate=lr), loss='mse')
print(f'learning rate is now ,', dummy_model.optimizer.lr.numpy())

learning rate is now , 0.1
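As one hedged illustration of adjusting the rate "in response to how optimization is progressing", the sketch below halves the learning rate whenever the monitored loss has failed to improve for a given number of epochs. The class name `PlateauReducer`, its parameters, and the loss values are hypothetical and not part of the original text. In a real training loop, the returned value would be written into the optimizer in the same way as in the snippets above.

```python
class PlateauReducer:
    """Hypothetical helper: shrink the learning rate when the loss stalls."""

    def __init__(self, lr, factor=0.5, patience=2, min_lr=1e-4):
        self.lr = lr
        self.factor = factor
        self.patience = patience
        self.min_lr = min_lr
        self.best = float('inf')
        self.bad_epochs = 0

    def update(self, loss):
        """Record the latest loss and return the learning rate to use next."""
        if loss < self.best:
            # Progress was made: remember the new best and reset the counter.
            self.best = loss
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
            if self.bad_epochs >= self.patience:
                # No progress for `patience` epochs: decay, but not below min_lr.
                self.lr = max(self.min_lr, self.lr * self.factor)
                self.bad_epochs = 0
        return self.lr


reducer = PlateauReducer(lr=0.1)
made_up_losses = [1.0, 0.8, 0.8, 0.81, 0.7, 0.7, 0.7]  # illustrative values only
rates = [reducer.update(l) for l in made_up_losses]
print(rates)
```

The rate stays at its initial value while the loss improves and is halved after each run of two epochs without improvement.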

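More generally, we want to define a scheduler. When invoked with the number of updates, it returns the appropriate value of the learning rate. Let's define a simple one that sets the learning rate to $\eta = \eta_0 (t + 1)^{-\frac{1}{2}}$.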

class SquareRootScheduler:
    def __init__(self, lr=0.1):
        self.lr = lr

    def __call__(self, num_update):
        return self.lr * pow(num_update + 1.0, -0.5)


Let's plot its behavior over a range of values.

scheduler = SquareRootScheduler(lr=0.1)
d2l.plot(torch.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])

scheduler = SquareRootScheduler(lr=0.1)
d2l.plot(np.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])

scheduler = SquareRootScheduler(lr=0.1)
d2l.plot(tf.range(num_epochs), [scheduler(t) for t in range(num_epochs)])

Now let's see how this plays out for training on Fashion-MNIST. We simply provide the scheduler as an additional argument to the training algorithm.

net = net_fn()
trainer = torch.optim.SGD(net.parameters(), lr)
train(net, train_iter, test_iter, num_epochs, loss, trainer, device,
      scheduler)

train loss 0.284, train acc 0.896, test acc 0.874

trainer = gluon.Trainer(net.collect_params(), 'sgd',

{'lr_scheduler': scheduler})

train(net, train_iter, test_iter, num_epochs, loss, trainer, device)

train loss 0.524, train acc 0.810, test acc 0.812

train(net, train_iter, test_iter, num_epochs, lr, custom_callback=LearningRateScheduler(scheduler))

loss 0.381, train acc 0.860, test acc 0.848 49349.5 examples/sec on /GPU:0

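This worked quite a bit better than previously. Two things stand out. First, the curve is considerably smoother than before. Second, there is less overfitting. Unfortunately, why certain strategies lead to less overfitting is not a well-resolved question in theory. There is some argument that a smaller step size leads to parameters that are closer to zero and thus simpler. However, this does not explain the phenomenon entirely, since we do not really stop early but simply reduce the learning rate gently.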

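12.11.3. Policies

While we cannot possibly cover the entire variety of learning rate schedulers, we attempt to give a brief overview of popular policies below. Common choices are polynomial decay and piecewise constant schedules. Beyond that, cosine learning rate schedules have been found to work well empirically on some problems. Lastly, on some problems it is beneficial to warm up the optimizer before using large learning rates.

12.11.3.1. Factor Scheduler

One alternative to polynomial decay is multiplicative decay, that is $\eta_{t+1} \leftarrow \eta_t \cdot \alpha$ for $\alpha \in (0, 1)$. To prevent the learning rate from decaying beyond a reasonable lower bound, the update equation is often modified to $\eta_{t+1} \leftarrow \max(\eta_{\min}, \eta_t \cdot \alpha)$.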

class FactorScheduler:
    def __init__(self, factor=1, stop_factor_lr=1e-7, base_lr=0.1):
        self.factor = factor
        self.stop_factor_lr = stop_factor_lr
        self.base_lr = base_lr

    def __call__(self, num_update):
        self.base_lr = max(self.stop_factor_lr, self.base_lr * self.factor)
        return self.base_lr

scheduler = FactorScheduler(factor=0.9, stop_factor_lr=1e-2, base_lr=2.0)
d2l.plot(torch.arange(50), [scheduler(t) for t in range(50)])


scheduler = FactorScheduler(factor=0.9, stop_factor_lr=1e-2, base_lr=2.0)

d2l.plot(np.arange(50), [scheduler(t) for t in range(50)])


scheduler = FactorScheduler(factor=0.9, stop_factor_lr=1e-2, base_lr=2.0)

d2l.plot(tf.range(50), [scheduler(t) for t in range(50)])

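This can also be accomplished by a built-in scheduler in MXNet via the `lr_scheduler.FactorScheduler` object. It takes a few more parameters, such as warmup period, warmup mode (linear or constant), the maximum number of desired updates, etc. Going forward we will use the built-in schedulers as appropriate and only explain their functionality here. As illustrated, it is fairly straightforward to build your own scheduler if needed.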
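Since each update multiplies by a constant, the factor schedule has the closed form $\eta_t = \max(\eta_{\min}, \eta_0 \alpha^t)$ after $t$ updates. The following sketch, which is an illustration rather than part of the original text, uses this to estimate how many updates it takes to hit the floor and checks the estimate by simulation. The helper names `steps_to_floor` and `simulate_steps_to_floor` are hypothetical.

```python
import math


def steps_to_floor(base_lr, factor, min_lr):
    """Smallest t with base_lr * factor**t <= min_lr, from the closed form."""
    return math.ceil(math.log(min_lr / base_lr) / math.log(factor))


def simulate_steps_to_floor(base_lr, factor, min_lr):
    """Apply the multiplicative update until the floor is reached."""
    lr, t = base_lr, 0
    while lr > min_lr:
        lr = max(min_lr, lr * factor)
        t += 1
    return t


# Same settings as the FactorScheduler example in this section.
print(steps_to_floor(2.0, 0.9, 1e-2), simulate_steps_to_floor(2.0, 0.9, 1e-2))
```

A calculation like this is a quick way to judge whether a chosen factor and floor are compatible with the planned number of updates.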

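12.11.3.2. Multi Factor Scheduler

A common strategy for training deep networks is to keep the learning rate piecewise constant and to decrease it by a given amount every so often. That is, given a set of times at which to decrease the rate, such as $s = \{5, 10, 20\}$, decrease $\eta_{t+1} \leftarrow \eta_t \cdot \alpha$ whenever $t \in s$. Assuming that the value is halved at each step, we can implement this as follows.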

net = net_fn()
trainer = torch.optim.SGD(net.parameters(), lr=0.5)
scheduler = lr_scheduler.MultiStepLR(trainer, milestones=[15, 30], gamma=0.5)

def get_lr(trainer, scheduler):
    lr = scheduler.get_last_lr()[0]
    trainer.step()
    scheduler.step()
    return lr

d2l.plot(torch.arange(num_epochs), [get_lr(trainer, scheduler)
                                  for t in range(num_epochs)])

scheduler = lr_scheduler.MultiFactorScheduler(step=[15, 30], factor=0.5,

base_lr=0.5)

d2l.plot(np.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])

class MultiFactorScheduler:
    def __init__(self, step, factor, base_lr):
        self.step = step
        self.factor = factor
        self.base_lr = base_lr

    def __call__(self, epoch):
        if epoch in self.step:
            self.base_lr = self.base_lr * self.factor
            return self.base_lr
        else:
            return self.base_lr

scheduler = MultiFactorScheduler(step=[15, 30], factor=0.5, base_lr=0.5)
d2l.plot(tf.range(num_epochs), [scheduler(t) for t in range(num_epochs)])
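The `MultiFactorScheduler` class above mutates `base_lr` each time it is called with a milestone epoch, so calling it twice for the same epoch would apply the decay twice. A stateless alternative, sketched here purely as an illustration (the function name `multi_factor_lr` is hypothetical), computes the rate directly from the number of milestones already passed.

```python
from bisect import bisect_right


def multi_factor_lr(epoch, milestones, factor, base_lr):
    """Return base_lr * factor**k, where k counts the milestones <= epoch.

    `milestones` must be sorted in increasing order.
    """
    passed = bisect_right(milestones, epoch)
    return base_lr * factor ** passed


# Same settings as above: halve the rate at epochs 15 and 30.
print([multi_factor_lr(t, [15, 30], 0.5, 0.5) for t in (0, 14, 15, 29, 30)])
```

Because the result depends only on `epoch`, the function can be called in any order or repeatedly, which is convenient when resuming training from a checkpoint.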

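The intuition behind this piecewise constant learning rate schedule is that one lets optimization proceed until a stationary point has been reached in terms of the distribution of weight vectors. Then (and only then) do we decrease the rate so as to obtain a higher quality proxy to a good local minimum. The example below shows how this can produce slightly better solutions.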

train(net, train_iter, test_iter, num_epochs, loss, trainer, device, scheduler)

train loss 0.186, train acc 0.931, test acc 0.897

trainer = gluon.Trainer(net.collect_params(), 'sgd',

{'lr_scheduler': scheduler})

train(net, train_iter, test_iter, num_epochs, loss, trainer, device)

train loss 0.195, train acc 0.926, test acc 0.893

train(net, train_iter, test_iter, num_epochs, lr, custom_callback=LearningRateScheduler(scheduler))

loss 0.237, train acc 0.913, test acc 0.882 49476.3 examples/sec on /GPU:0

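12.11.3.3. Cosine Scheduler

A rather perplexing heuristic was proposed by Loshchilov and Hutter (2016). It relies on the observation that we might not want to decrease the learning rate too drastically in the beginning and, moreover, that we might want to "refine" the solution in the end using a very small learning rate. This results in a cosine-like schedule with the following functional form for learning rates in the range $t \in [0, T]$.

$$\eta_t = \eta_T + \frac{\eta_0 - \eta_T}{2} \left(1 + \cos(\pi t / T)\right) \tag{12.11.1}$$

Here $\eta_0$ is the initial learning rate and $\eta_T$ is the target rate at time $T$. Furthermore, for $t > T$ we simply pin the value to $\eta_T$ without increasing it again. In the following example, we set the max update step $T = 20$.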

class CosineScheduler:
    def __init__(self, max_update, base_lr=0.01, final_lr=0,
                 warmup_steps=0, warmup_begin_lr=0):
        self.base_lr_orig = base_lr
        self.max_update = max_update
        self.final_lr = final_lr
        self.warmup_steps = warmup_steps
        self.warmup_begin_lr = warmup_begin_lr
        self.max_steps = self.max_update - self.warmup_steps

    def get_warmup_lr(self, epoch):
        # Linear interpolation from warmup_begin_lr to base_lr_orig
        increase = (self.base_lr_orig - self.warmup_begin_lr) \
                       * float(epoch) / float(self.warmup_steps)
        return self.warmup_begin_lr + increase

    def __call__(self, epoch):
        if epoch < self.warmup_steps:
            return self.get_warmup_lr(epoch)
        if epoch <= self.max_update:
            self.base_lr = self.final_lr + (
                self.base_lr_orig - self.final_lr) * (1 + math.cos(
                math.pi * (epoch - self.warmup_steps) / self.max_steps)) / 2
        # Beyond max_update, keep returning the last computed value
        return self.base_lr

scheduler = CosineScheduler(max_update=20, base_lr=0.3, final_lr=0.01)
d2l.plot(torch.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])

scheduler = lr_scheduler.CosineScheduler(max_update=20, base_lr=0.3,

final_lr=0.01)

d2l.plot(np.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])


scheduler = CosineScheduler(max_update=20, base_lr=0.3, final_lr=0.01)

d2l.plot(tf.range(num_epochs), [scheduler(t) for t in range(num_epochs)])
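As a quick sanity check of Eq. (12.11.1), the sketch below evaluates the formula directly as a stateless function (the name `cosine_lr` is hypothetical and not from the original text). It should start at $\eta_0$, pass through the midpoint $(\eta_0 + \eta_T)/2$ at $t = T/2$, and stay at $\eta_T$ for $t \geq T$.

```python
import math


def cosine_lr(t, T, eta_0, eta_T):
    """Cosine schedule: eta_T + (eta_0 - eta_T) / 2 * (1 + cos(pi * t / T))."""
    if t >= T:
        # Pin the rate at the target value instead of letting it rise again.
        return eta_T
    return eta_T + (eta_0 - eta_T) / 2 * (1 + math.cos(math.pi * t / T))


# Same settings as the scheduler above: T = 20, eta_0 = 0.3, eta_T = 0.01.
for t in (0, 10, 20, 25):
    print(t, round(cosine_lr(t, T=20, eta_0=0.3, eta_T=0.01), 4))
```

Unlike the `CosineScheduler` class, this function keeps no internal state, so its value for a given `t` does not depend on earlier calls.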

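In the context of computer vision this schedule can lead to improved results. Note, though, that such improvements are not guaranteed (as can be seen below).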

net = net_fn()
trainer = torch.optim.SGD(net.parameters(), lr=0.3)
train(net, train_iter, test_iter, num_epochs, loss, trainer, device,
      scheduler)

train loss 0.229, train acc 0.916, test acc 0.886

trainer = gluon.Trainer(net.collect_params(), 'sgd',

{'lr_scheduler': scheduler})

train(net, train_iter, test_iter, num_epochs, loss, trainer, device)

train loss 0.345, train acc 0.876, test acc 0.866

train(net, train_iter, test_iter, num_epochs, lr, custom_callback=LearningRateScheduler(scheduler))

loss 0.261, train acc 0.905, test acc 0.881 49572.1 examples/sec on /GPU:0

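12.11.3.4. Warmup

In some cases initializing the parameters is not sufficient to guarantee a good solution. This is particularly a problem for some advanced network designs that may lead to unstable optimization problems. We could address this by choosing a sufficiently small learning rate to prevent divergence in the beginning. Unfortunately this means that progress is slow. Conversely, a large learning rate initially leads to divergence.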

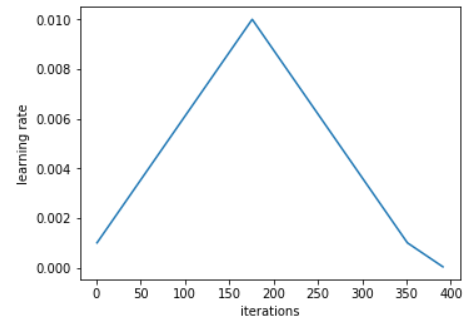

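A rather simple fix for this dilemma is to use a warmup period during which the learning rate increases to its initial maximum, and then to cool down the rate until the end of the optimization process. For simplicity one typically uses a linear increase for this purpose. This leads to a schedule of the form indicated below.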

scheduler = CosineScheduler(20, warmup_steps=5, base_lr=0.3, final_lr=0.01)
d2l.plot(torch.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])

scheduler = lr_scheduler.CosineScheduler(20, warmup_steps=5, base_lr=0.3,

final_lr=0.01)

d2l.plot(np.arange(num_epochs), [scheduler(t) for t in range(num_epochs)])

scheduler = CosineScheduler(20, warmup_steps=5, base_lr=0.3, final_lr=0.01)
d2l.plot(tf.range(num_epochs), [scheduler(t) for t in range(num_epochs)])

Note that the network converges better initially (in particular, observe the performance during the first 5 epochs).

net = net_fn()
trainer = torch.optim.SGD(net.parameters(), lr=0.3)
train(net, train_iter, test_iter, num_epochs, loss, trainer, device,
      scheduler)

train loss 0.173, train acc 0.936, test acc 0.902

trainer = gluon.Trainer(net.collect_params(), 'sgd',

{'lr_scheduler': scheduler})

train(net, train_iter, test_iter, num_epochs, loss, trainer, device)

train loss 0.347, train acc 0.875, test acc 0.871

train(net, train_iter, test_iter, num_epochs, lr, custom_callback=LearningRateScheduler(scheduler))

loss 0.270, train acc 0.901, test acc 0.880 49891.2 examples/sec on /GPU:0

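Warmup can be applied to any scheduler (not just cosine). For a more detailed discussion of learning rate schedules and many more experiments see also (Gotmare et al., 2018). In particular, they find that a warmup phase limits the amount of divergence of parameters in very deep networks. This makes intuitive sense, since we would expect significant divergence due to random initialization in those parts of the network that take the most time to make progress in the beginning.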
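Because warmup is independent of the schedule that follows it, it can be written as a wrapper around any callable scheduler. The sketch below is one possible way to do this and is not taken from the original text. The function `with_linear_warmup`, the convention of shifting the wrapped schedule by `warmup_steps`, and the assumption that the wrapped schedule is stateless are all choices made for illustration.

```python
def with_linear_warmup(schedule, warmup_steps, warmup_begin_lr=0.0):
    """Wrap `schedule` (a stateless callable step -> lr) with linear warmup.

    The rate rises linearly from `warmup_begin_lr` to `schedule(0)` over
    `warmup_steps` steps; afterwards the wrapped schedule runs from its start.
    """
    peak_lr = schedule(0)

    def wrapped(step):
        if step < warmup_steps:
            fraction = step / warmup_steps
            return warmup_begin_lr + (peak_lr - warmup_begin_lr) * fraction
        # After warmup, shift time so the wrapped schedule starts at step 0.
        return schedule(step - warmup_steps)

    return wrapped


def square_root_schedule(step, lr=0.1):
    """Same rule as SquareRootScheduler: lr * (step + 1) ** -0.5."""
    return lr * (step + 1.0) ** -0.5


warm = with_linear_warmup(square_root_schedule, warmup_steps=5)
print([round(warm(t), 4) for t in range(10)])
```

Stateful schedulers such as `FactorScheduler` would need a different treatment, since evaluating `schedule(0)` up front already advances their internal state.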

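12.11.4. Summary

Decreasing the learning rate during training can lead to improved accuracy and (most perplexingly) reduced overfitting of the model.

A piecewise decrease of the learning rate whenever progress has plateaued is effective in practice. Essentially this ensures that we converge efficiently to a suitable solution and only then reduce the inherent variance of the parameters by reducing the learning rate.

Cosine schedulers are popular for some computer vision problems. See e.g., GluonCV for details of such a scheduler.

A warmup period before optimization can prevent divergence.

Optimization serves multiple purposes in deep learning. Besides minimizing the training objective, different choices of optimization algorithms and learning rate scheduling can lead to rather different amounts of generalization and overfitting on the test set (for the same amount of training error).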

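12.11.5. Exercises

Experiment with the optimization behavior for a given fixed learning rate. What is the best model you can obtain this way?

How does convergence change if you change the exponent of the decrease in the learning rate? Use `PolyScheduler` for your convenience in the experiments.

Apply the cosine scheduler to large computer vision problems, e.g., training ImageNet. How does it affect performance relative to other schedulers?

How long should warmup last?

Can you connect optimization and sampling? Start by using results from Welling and Teh (2011) on Stochastic Gradient Langevin Dynamics.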
