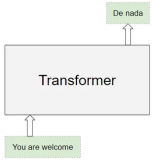

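8 new architectures!

This release adds support for many new multimodal architectures, bringing the total number of supported architectures to 80!

1. VITS for multilingual text-to-speech across more than 1,000 languages. (#466)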

```javascript
import { pipeline } from '@xenova/transformers';

// Create English text-to-speech pipeline
const synthesizer = await pipeline('text-to-speech', 'Xenova/mms-tts-eng');

// Generate speech
const output = await synthesizer('I love transformers');
// {
//   audio: Float32Array(26112) [...],
//   sampling_rate: 16000
// }
```
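The pipeline returns raw samples rather than an audio file. As a minimal sketch of one way to save them, the helper below packs mono `Float32Array` samples into a 16-bit PCM WAV container. `encodeWav` is a hypothetical name and not part of `@xenova/transformers`; it assumes the samples lie in the range [-1, 1].

```javascript
// Encode mono Float32 samples in [-1, 1] as a 16-bit PCM WAV file.
// `encodeWav` is a hypothetical helper, not part of @xenova/transformers.
function encodeWav(samples, samplingRate) {
  const bytesPerSample = 2;
  const dataSize = samples.length * bytesPerSample;
  const buffer = new ArrayBuffer(44 + dataSize);
  const view = new DataView(buffer);

  const writeAscii = (offset, text) => {
    for (let i = 0; i < text.length; ++i) {
      view.setUint8(offset + i, text.charCodeAt(i));
    }
  };

  writeAscii(0, 'RIFF');
  view.setUint32(4, 36 + dataSize, true); // size of everything after this field
  writeAscii(8, 'WAVE');
  writeAscii(12, 'fmt ');
  view.setUint32(16, 16, true); // size of the fmt chunk
  view.setUint16(20, 1, true); // audio format: PCM
  view.setUint16(22, 1, true); // channels: mono
  view.setUint32(24, samplingRate, true);
  view.setUint32(28, samplingRate * bytesPerSample, true); // byte rate
  view.setUint16(32, bytesPerSample, true); // block align
  view.setUint16(34, 16, true); // bits per sample
  writeAscii(36, 'data');
  view.setUint32(40, dataSize, true);

  // Clamp each sample and scale it to the signed 16-bit range.
  for (let i = 0; i < samples.length; ++i) {
    const clamped = Math.max(-1, Math.min(1, samples[i]));
    view.setInt16(44 + i * bytesPerSample, Math.round(clamped * 32767), true);
  }
  return new Uint8Array(buffer);
}
```

In Node.js, the result could then be written with something like `fs.writeFileSync('out.wav', encodeWav(output.audio, output.sampling_rate))`.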

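The available models are listed on the Hugging Face Hub. To start, we have converted 12 of the roughly 1,140 models on the Hub. If the one you need is not among them, you can convert it yourself with our conversion script.

2. CLIPSeg for zero-shot image segmentation. (#478)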

```javascript
import { AutoTokenizer, AutoProcessor, CLIPSegForImageSegmentation, RawImage } from '@xenova/transformers';

// Load tokenizer, processor, and model
const tokenizer = await AutoTokenizer.from_pretrained('Xenova/clipseg-rd64-refined');
const processor = await AutoProcessor.from_pretrained('Xenova/clipseg-rd64-refined');
const model = await CLIPSegForImageSegmentation.from_pretrained('Xenova/clipseg-rd64-refined');

// Run tokenization
const texts = ['a glass', 'something to fill', 'wood', 'a jar'];
const text_inputs = tokenizer(texts, { padding: true, truncation: true });

// Read image and run processor
const image = await RawImage.read('https://github.com/timojl/clipseg/blob/master/example_image.jpg?raw=true');
const image_inputs = await processor(image);

// Run model with both text and pixel inputs
const { logits } = await model({ ...text_inputs, ...image_inputs });
// logits: Tensor {
//   dims: [4, 352, 352],
//   type: 'float32',
//   data: Float32Array(495616)[ ... ],
//   size: 495616
// }
```

You can visualize the predictions as follows:

```javascript
const preds = logits
  .unsqueeze_(1)
  .sigmoid_()
  .mul_(255)
  .round_()
  .to('uint8');

for (let i = 0; i < preds.dims[0]; ++i) {
  const img = RawImage.fromTensor(preds[i]);
  img.save(`prediction_${i}.png`);
}
```

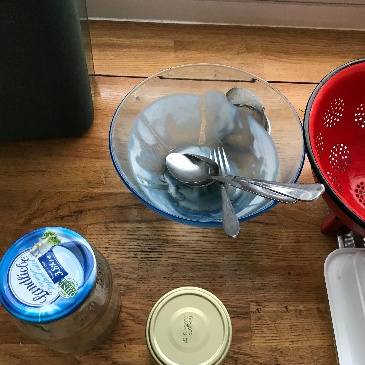

| Original | "a glass" | "something to fill" | "wood" | "a jar" |
|---|---|---|---|---|
| | | | | |

The available models are listed on the Hugging Face Hub.
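To make the CLIPSeg visualization step concrete, here is a rough plain-array equivalent of the sigmoid, scale, round, and cast chain, written without the library's tensor type. `logitsToGrayscale` is a hypothetical helper used only for illustration.

```javascript
// Plain-array equivalent of: sigmoid -> multiply by 255 -> round -> uint8.
// `logitsToGrayscale` is a hypothetical helper for illustration only.
function logitsToGrayscale(logits) {
  const pixels = new Uint8Array(logits.length);
  for (let i = 0; i < logits.length; ++i) {
    // Sigmoid maps any real-valued logit to a probability in (0, 1).
    const probability = 1 / (1 + Math.exp(-logits[i]));
    pixels[i] = Math.round(probability * 255);
  }
  return pixels;
}

// logitsToGrayscale([0, 20, -20]) -> Uint8Array [128, 255, 0]
```

A logit of 0 lands on mid-gray, while strongly positive and negative logits saturate to white and black, which is why confident regions stand out in the saved images.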

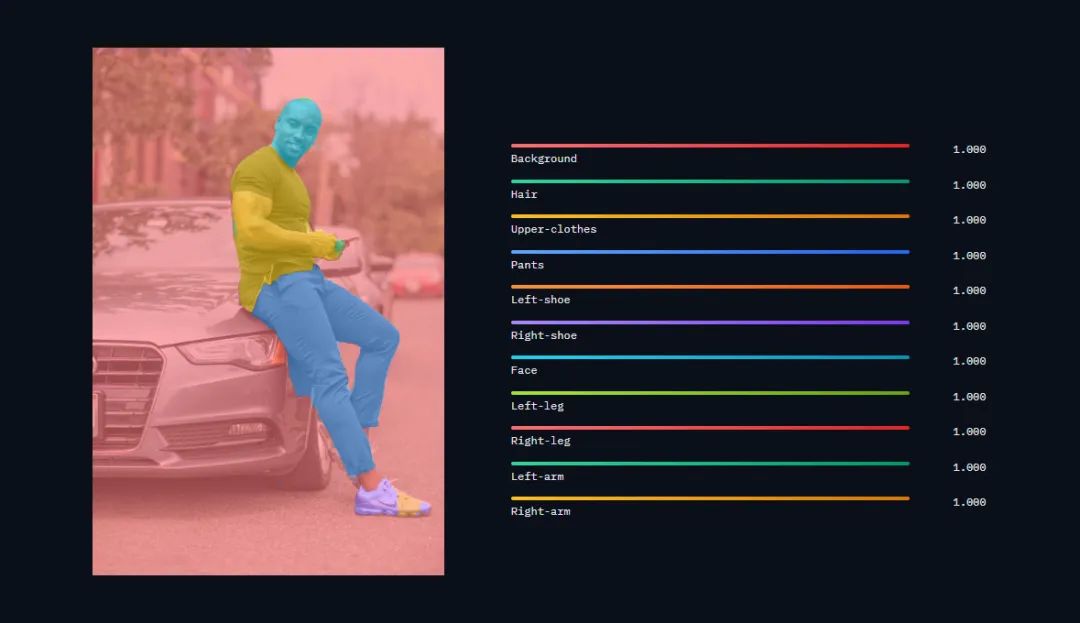

3. SegFormer for semantic segmentation and image classification. (#480)

```javascript
import { pipeline } from '@xenova/transformers';

// Create an image segmentation pipeline
const segmenter = await pipeline('image-segmentation', 'Xenova/segformer_b2_clothes');

// Segment an image
const url = 'https://huggingface.co/datasets/Xenova/transformers.js-docs/resolve/main/young-man-standing-and-leaning-on-car.jpg';
const output = await segmenter(url);
```
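The snippet above does not show the pipeline's output. As a sketch only, assume each result carries a `label` and a single-channel `mask` whose `data` array is nonzero inside the segment; that shape is an assumption here, not something the source states. Under that assumption, the share of the image covered by each label could be computed as follows (`labelCoverage` is a hypothetical helper):

```javascript
// Assumed result shape (not confirmed by the source):
//   [{ label: string, mask: { data: ArrayLike<number> } }, ...]
// `labelCoverage` is a hypothetical helper for illustration only.
function labelCoverage(segments) {
  return segments.map(({ label, mask }) => {
    let inside = 0;
    for (const value of mask.data) {
      if (value > 0) ++inside; // nonzero pixels belong to the segment
    }
    return { label, fraction: inside / mask.data.length };
  });
}
```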

4. Table Transformer for extracting tables from unstructured documents. (#477)

```javascript
import { pipeline } from '@xenova/transformers';

// Create an object detection pipeline
const detector = await pipeline('object-detection', 'Xenova/table-transformer-detection', { quantized: false });

// Detect tables in an image
const img = 'https://huggingface.co/datasets/Xenova/transformers.js-docs/resolve/main/invoice-with-table.png';
const output = await detector(img);
// [{ score: 0.9967531561851501, label: 'table', box: { xmin: 52, ymin: 322, xmax: 546, ymax: 525 } }]
```
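Each detection is a plain object with a score, a label, and a box, so post-processing can be ordinary array code. The sketch below keeps confident detections and derives each box's width and height, which is what a later cropping step would need. `summarizeDetections` and the 0.9 default threshold are illustrative choices, not part of the library.

```javascript
// Keep confident detections and add width/height derived from each box.
// `summarizeDetections` and the 0.9 default threshold are illustrative only.
function summarizeDetections(detections, minScore = 0.9) {
  return detections
    .filter(({ score }) => score >= minScore)
    .map(({ label, score, box }) => ({
      label,
      score,
      width: box.xmax - box.xmin,
      height: box.ymax - box.ymin,
    }));
}
```

For the detection shown above, this gives a table region 494 pixels wide and 203 pixels tall.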

5. DiT for document image classification. (#474)

```javascript
import { pipeline } from '@xenova/transformers';

// Create an image classification pipeline
const classifier = await pipeline('image-classification', 'Xenova/dit-base-finetuned-rvlcdip');

// Classify an image
const url = 'https://huggingface.co/datasets/Xenova/transformers.js-docs/resolve/main/coca_cola_advertisement.png';
const output = await classifier(url);
// [{ label: 'advertisement', score: 0.9035086035728455 }]
```

6. SigLIP for zero-shot image classification. (#473)

```javascript
import { pipeline } from '@xenova/transformers';

// Create a zero-shot image classification pipeline
const classifier = await pipeline('zero-shot-image-classification', 'Xenova/siglip-base-patch16-224');

// Classify images according to provided labels
const url = 'http://images.cocodataset.org/val2017/000000039769.jpg';
const output = await classifier(url, ['2 cats', '2 dogs'], {
  hypothesis_template: 'a photo of {}',
});
// [
//   { score: 0.16770583391189575, label: '2 cats' },
//   { score: 0.000022096000975579955, label: '2 dogs' }
// ]
```
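Note that the two scores above do not sum to 1. That is consistent with SigLIP scoring each label independently with a sigmoid rather than normalizing across labels with a softmax. The sketch below contrasts the two on the same inputs; the helper names are hypothetical, and the source does not show the pipeline's internals or the actual logits.

```javascript
// Independent per-label scores: each value lies in (0, 1), no shared total.
// Both helpers are hypothetical and exist only to illustrate the difference.
function sigmoidScores(logits) {
  return logits.map((x) => 1 / (1 + Math.exp(-x)));
}

// Normalized scores: values compete with each other and always sum to 1.
function softmaxScores(logits) {
  const max = Math.max(...logits); // subtract the max for numerical stability
  const exps = logits.map((x) => Math.exp(x - max));
  const total = exps.reduce((sum, value) => sum + value, 0);
  return exps.map((value) => value / total);
}
```

With sigmoid scoring, a low score for every label is a valid result, meaning that none of the candidate labels fits the image well.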

7. RoFormer for masked language modelling, sequence classification, token classification, and question answering. (#464)

```javascript
import { pipeline } from '@xenova/transformers';

// Create a masked language modelling pipeline
const pipe = await pipeline('fill-mask', 'Xenova/antiberta2');

// Predict missing token
const output = await pipe('Ḣ Q V Q ... C A [MASK] D ... T V S S');
```

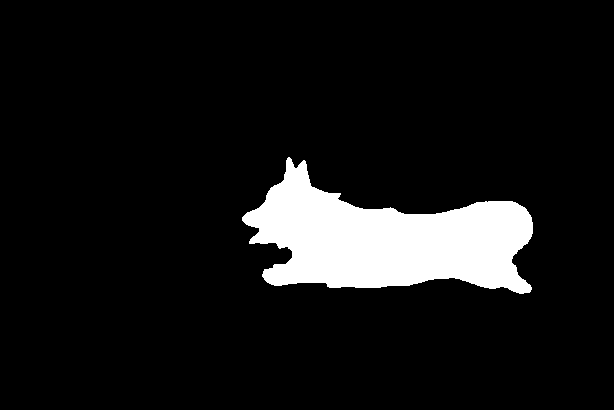

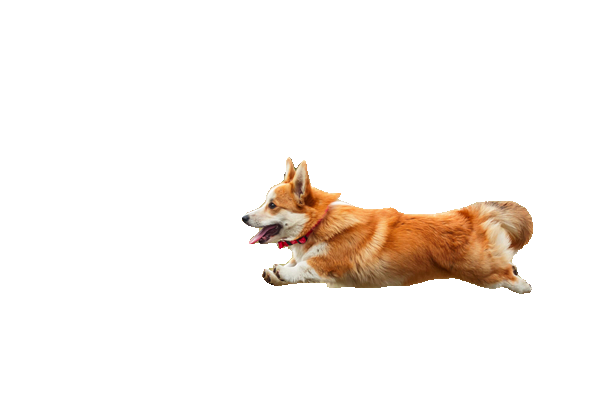

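8. Segment Anything Model (SAM)

The Segment Anything Model (SAM) can be used to generate segmentation masks for objects in a scene, given an input image and input points. A full list of pre-converted models is available on the Hugging Face Hub. Support for this model was added in #510.

Demo + source code: https://huggingface.co/spaces/Xenova/segment-anything-web

Example: Perform mask generation with Xenova/slimsam-77-uniform.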

```javascript
import { SamModel, AutoProcessor, RawImage } from '@xenova/transformers';

const model = await SamModel.from_pretrained('Xenova/slimsam-77-uniform');
const processor = await AutoProcessor.from_pretrained('Xenova/slimsam-77-uniform');

const img_url = 'https://huggingface.co/datasets/Xenova/transformers.js-docs/resolve/main/corgi.jpg';
const raw_image = await RawImage.read(img_url);
const input_points = [[[340, 250]]]; // 2D point prompt (x, y) on the image

const inputs = await processor(raw_image, input_points);
const outputs = await model(inputs);

const masks = await processor.post_process_masks(outputs.pred_masks, inputs.original_sizes, inputs.reshaped_input_sizes);
console.log(masks);
// [
//   Tensor {
//     dims: [ 1, 3, 410, 614 ],
//     type: 'bool',
//     data: Uint8Array(755220) [ ... ],
//     size: 755220
//   }
// ]

const scores = outputs.iou_scores;
console.log(scores);
// Tensor {
//   dims: [ 1, 1, 3 ],
//   type: 'float32',
//   data: Float32Array(3) [
//     0.8350210189819336,
//     0.9786665439605713,
//     0.8379436731338501
//   ],
//   size: 3
// }
```

The three predicted masks can be visualized as follows:

```javascript
const image = RawImage.fromTensor(masks[0][0].mul(255));
image.save('mask.png');
```

| Input image | Visualized output |
|---|---|
| | |

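Next, select the channel with the highest IoU score, which in this case is the second (green) channel. Intersecting it with the original image gives an isolated version of the subject: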

| Selected Mask | Intersected |
|---|---|
| | |
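The selection and intersection steps described above can be sketched with plain arrays. `argmax` picks the channel with the highest IoU score, and `intersectWithMask` makes every pixel outside the mask transparent. Both helpers are hypothetical, and the flat layouts (four RGBA values per pixel, one mask value per pixel) are assumptions made for this illustration.

```javascript
// Index of the largest value in an array-like (the first one wins on ties).
// Both helpers here are hypothetical and only illustrate the two steps.
function argmax(values) {
  let best = 0;
  for (let i = 1; i < values.length; ++i) {
    if (values[i] > values[best]) best = i;
  }
  return best;
}

// Assumes `rgba` holds 4 values per pixel and `mask` holds 1 value per pixel.
// Returns a copy in which pixels outside the mask have their alpha set to 0.
function intersectWithMask(rgba, mask) {
  const out = Uint8ClampedArray.from(rgba);
  for (let i = 0; i < mask.length; ++i) {
    if (!mask[i]) out[4 * i + 3] = 0;
  }
  return out;
}

// argmax([0.8350210189819336, 0.9786665439605713, 0.8379436731338501]) -> 1
```

Index 1 corresponds to the second channel, matching the choice made above.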

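Other improvements

- Fixed the HOSTNAME issue in the Next.js Dockerfile, by @Lian1230 in #461.
- Added a link to the empty template in the README in #467.
- Added support for processing non-square images with ConvNextFeatureExtractor in #503.
- Encoded the revision in remote URLs in #507.
- @Lian1230 made their first contribution in #461.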
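To illustrate why encoding the revision matters (#507), consider a revision name that contains slashes: left unencoded, it would be read as extra path segments. The sketch below is not the library's implementation, which the source does not describe. `buildRemoteUrl` is a hypothetical helper, and the `resolve/{revision}/{file}` URL layout is an assumption.

```javascript
// Hypothetical helper: build a Hub file URL with the revision safely encoded.
// The URL layout is an assumption; the source does not show how #507 works.
function buildRemoteUrl(model, revision, file) {
  return `https://huggingface.co/${model}/resolve/${encodeURIComponent(revision)}/${file}`;
}

// buildRemoteUrl('Xenova/mms-tts-eng', 'refs/pr/1', 'config.json')
// -> 'https://huggingface.co/Xenova/mms-tts-eng/resolve/refs%2Fpr%2F1/config.json'
```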

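Improved the typing of the pipeline function in #485. Thanks to @wesbos for the suggestion!

This also means that when you hover over a class name, you get example code to help you.

This release is a follow-up to #485, with additional IntelliSense-focused improvements (see the PR).

Added support for cross-encoder models (plus a fix for token type IDs). (#501)

Example: Information retrieval with Xenova/ms-marco-TinyBERT-L-2-v2.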

```javascript
import { AutoTokenizer, AutoModelForSequenceClassification } from '@xenova/transformers';

const model = await AutoModelForSequenceClassification.from_pretrained('Xenova/ms-marco-TinyBERT-L-2-v2');
const tokenizer = await AutoTokenizer.from_pretrained('Xenova/ms-marco-TinyBERT-L-2-v2');

const features = tokenizer(
  ['How many people live in Berlin?', 'How many people live in Berlin?'],
  {
    text_pair: [
      'Berlin has a population of 3,520,031 registered inhabitants in an area of 891.82 square kilometers.',
      'New York City is famous for the Metropolitan Museum of Art.',
    ],
    padding: true,
    truncation: true,
  }
);

const { logits } = await model(features);
console.log(logits.data);
// quantized:   [ 7.210887908935547, -11.559350967407227 ]
// unquantized: [ 7.235750675201416, -11.562294006347656 ]
```
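A cross-encoder produces one relevance logit per (query, passage) pair, so retrieval comes down to sorting the passages by that logit. A minimal sketch, where `rankPassages` is a hypothetical helper and not part of the library:

```javascript
// Pair each passage with its logit and sort from most to least relevant.
// `rankPassages` is a hypothetical helper for illustration only.
function rankPassages(passages, logits) {
  return passages
    .map((text, index) => ({ text, score: logits[index] }))
    .sort((a, b) => b.score - a.score);
}
```

With the logits printed above, the Berlin passage ranks first and the New York passage second, whether the quantized or the unquantized model is used.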


Original title: Transformers.js 2.13 and 2.14 released, adding 8 new architectures

Source: WeChat official account 新机器视觉 (WeChat ID: vision263com). Please credit the source when reposting.

发布评论请先 登录

相关推荐

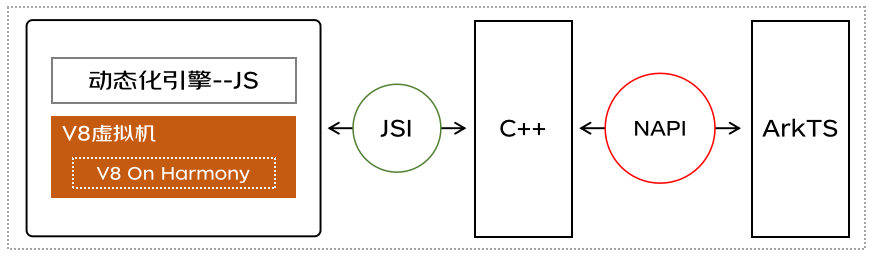

鸿蒙跨端实践-JS虚拟机架构实现

使用基于Transformers的API在CPU上实现LLM高效推理

用户管理-动态调用VI(新增用户插件)

OpenHarmony 3.0 LTS 新增特性功能

94个JS/eTS开源组件首发上新,肯定有你要用的一款!

HarmonyOS 3.0 Beta版本说明

面向开发者的HarmonyOS 3.0 Beta发布

OpenHarmony 3.2 Beta2 版本发布:支持电源管理重启恢复机制等

DevEco Studio 3.1 Beta1版本发布——新增六大关键特性,开发更高效

BJDEEN PULSE TRANSFORMERS

node.js的js要点总结

GPU-Z 2.26.0正式发布 新增对部分假冒显卡核心的支持

安徽省已累计建设完成5G基站2.14万个

Transformers的功能概述

Transformers.js 2.13、2.14 发布,新增8个新的架构

Transformers.js 2.13、2.14 发布,新增8个新的架构

评论